Running multiple, remotely accessible and secure services from OpenMediaVault 5

August 22, 2020

I somewhat recently set up OpenMediaVault 5 on a NAS server I built. I used OMV Extras to set up Docker and Portainer, plus a couple of services I wanted to be able to access from outside my local area network. There were a couple of problems I had to solve to make this happen:

- Any resources exposed on the public internet need to be password protected, but passwords transmitted across the internet over plain HTTP are insecure and easily stolen. Therefore we need SSL certificates (and don’t want to spend money to get them).

- My home’s connection to the internet gives us a single public IP address, but we’d like to run multiple services on the HTTPS port (443).

Luckily the Let’s Encrypt docker image gives us a way to solve both those problems.

1. Create docker containers for the services you want to expose

The details of getting OMV, Docker and some services running in Docker containers are beyond the scope of this post, but there are plenty of other resources available to help you figure that out.

In my case I had Airsonic and Nextcloud running locally but wanted to be able to access them from the internet.

2. Sign up for some free subdomains

There are several providers out there (Duck DNS, afraid.org, etc…) that allow you to sign up for a free account and get a subdomain (foo.duckdns.org) on their domain. We’ll need a subdomain per application we want to host so in this example we’ll grab two subdomains. We’ll pretend the subdomains are airsonic.ddns.provider and nextcloud.ddns.provider in all the examples in this blog post.

3. Forward ports 80 and 443 to your server

The Let’s Encrypt container we’re about to install needs to be able to accept inbound requests from the internet on ports 80 and 443. To do that you’ll need to forward those ports through your router and on to the OMV server. For the internal ports you can choose any two free ports on your server. They don’t need to be ports 80 and 443 if those are already used by other containers, although if ports 80 and 443 are free on your OMV server it might be easiest to just use those.

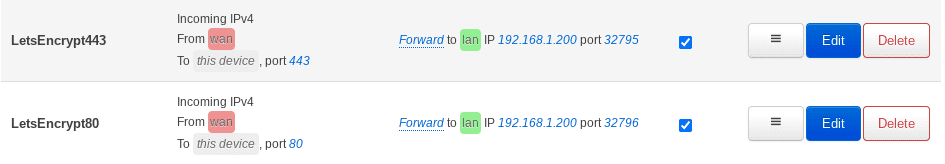

In this example we’ll forward the router’s port 80 -> port 32796 on the server (and then set up the network bridge so port 32796 on the server goes to port 80 on the Docker container). Similarly we’ll forward port 443 -> port 32975 (and then back to 443 via the network bridge).

Here’s what the entries look like on my OpenWRT router:
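In text form, roughly equivalent rules could be added from the router’s shell with uci. This is only a sketch: the `forward_port` helper and the rule names are made up for illustration, 192.168.1.10 is a placeholder for your OMV server’s LAN address, and it assumes the default `wan` and `lan` firewall zones.

```shell
# Sketch: add one WAN -> LAN port forward on OpenWRT via uci.
# Usage: forward_port NAME WAN_PORT SERVER_IP SERVER_PORT
forward_port() {
  uci add firewall redirect >/dev/null
  uci set "firewall.@redirect[-1].name=$1"
  uci set "firewall.@redirect[-1].target=DNAT"
  uci set "firewall.@redirect[-1].src=wan"
  uci set "firewall.@redirect[-1].proto=tcp"
  uci set "firewall.@redirect[-1].src_dport=$2"
  uci set "firewall.@redirect[-1].dest=lan"
  uci set "firewall.@redirect[-1].dest_ip=$3"
  uci set "firewall.@redirect[-1].dest_port=$4"
}

# On the router (192.168.1.10 is a placeholder for the OMV server):
#   forward_port letsencrypt-http  80  192.168.1.10 32796
#   forward_port letsencrypt-https 443 192.168.1.10 32975
#   uci commit firewall && /etc/init.d/firewall restart
```

The web interface does the same thing, so use whichever you are more comfortable with.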

4. Install a container running Let’s Encrypt

In Portainer I’ve been using the app templates at https://raw.githubusercontent.com/Qballjos/portainer_templates/master/Template/template.json to install the programs I need. It also has a template for Let’s Encrypt, a free service that issues a valid SSL certificate if you can prove you have control of a domain or subdomain.

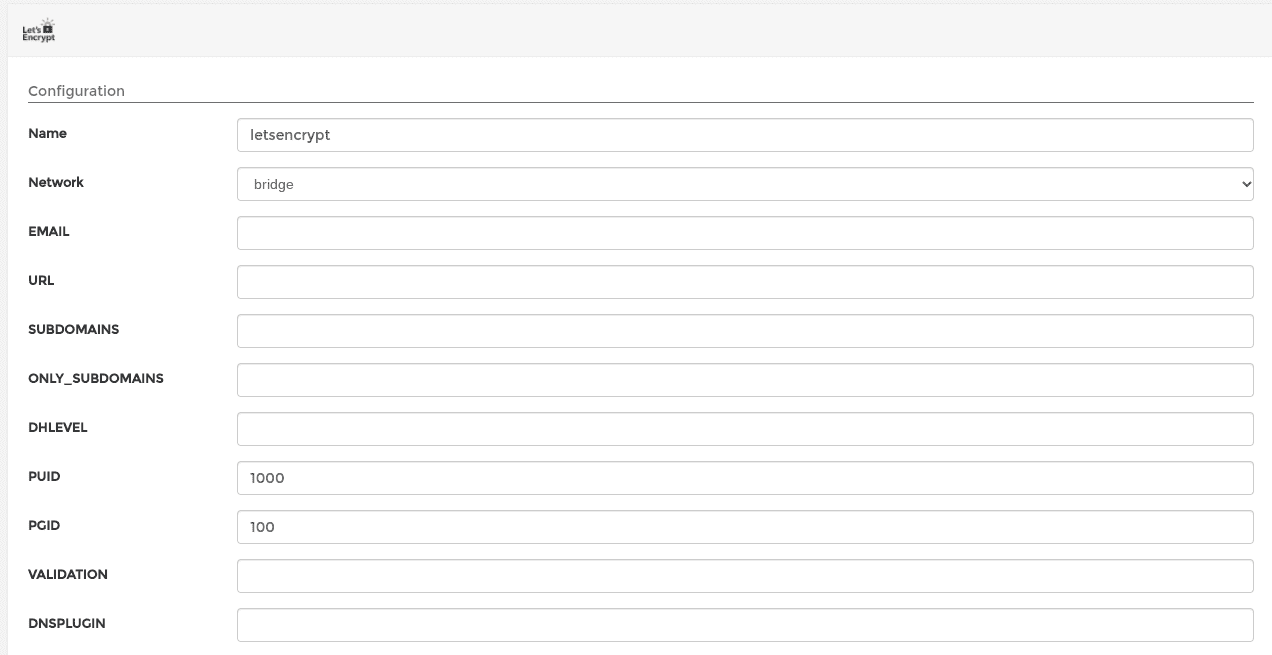

Before starting the deploy process you’ll need to fill in the configuration section of the app template:

Here’s what to fill in:

- EMAIL - Your email address. I didn’t see any emails sent to me after going through the process but my guess is Let’s Encrypt requires an email on file for the certificates.

- URL - Fill in the domain of the DDNS provider you signed up for. So in this case ddns.provider.

- SUBDOMAINS - A comma separated list of the subdomains. Again in this case that would be airsonic,nextcloud.

- ONLY_SUBDOMAINS - Set to true. This just tells Let’s Encrypt we only want a certificate for the subdomains and not the entire domain.

- DHLEVEL - Seems like this is deprecated in later versions of the docker image but wasn’t in my version. You can just leave it empty.

- VALIDATION - The validation method to use. We’ll use http.

- DNSPLUGIN - Can be left empty since we’re not using the DNS validation method.

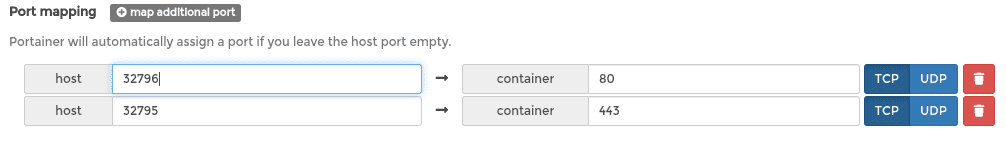

Next, find the “Show advanced options” link and click on it. You should see a section for port mappings. We need to map the container’s ports 80 and 443 to the host ports we forwarded the router’s ports 80 and 443 to. In section 3 I picked 32796 (for port 80) and 32975 (for port 443), so we’ll fill those in as the host ports:
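If you would rather skip the template, the same settings can be sketched as a plain docker run. Treat this as an approximation, not what the template actually executes: the `run_letsencrypt` helper is made up for illustration, the `linuxserver/letsencrypt` image name and the `/config` volume are assumptions based on the paths used later in this post, and the host ports follow the step 3 mapping (80 -> 32796, 443 -> 32975).

```shell
# Sketch of a docker run roughly matching the template settings.
# Usage: run_letsencrypt CONTAINER_NAME EMAIL CONFIG_DIR
run_letsencrypt() {
  docker run -d \
    --name "$1" \
    -e EMAIL="$2" \
    -e URL=ddns.provider \
    -e SUBDOMAINS=airsonic,nextcloud \
    -e ONLY_SUBDOMAINS=true \
    -e VALIDATION=http \
    -p 32796:80 \
    -p 32975:443 \
    -v "$3":/config \
    linuxserver/letsencrypt   # assumed image name
}

# Example (the email address and host path are placeholders):
#   run_letsencrypt letencrypt you@example.com /srv/letsencrypt/config
```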

Once that’s done you can go ahead and deploy the container. After it’s up and running we need to make sure getting the SSL certificates worked. SSH into your OMV box and run:

docker logs -f letencrypt (or whatever you named your container if you changed the default name)

You should see an IMPORTANT NOTES section and, if things worked, a congratulations message that says your certificate and chain have been saved locally. If not, check that your port forwarding was set up correctly.
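If you don’t want to scroll through the log by eye, a small check along these lines should do. It is a sketch that only looks for the word “congratulations” in the log, and `cert_issued` is a made-up helper name.

```shell
# Succeeds if the container log contains the congratulations message.
# Usage: cert_issued CONTAINER_NAME
cert_issued() {
  docker logs "$1" 2>&1 | grep -qi 'congratulations'
}

# Example:
#   cert_issued letencrypt && echo "certificate saved" || echo "check port forwarding"
```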

5. Edit config files for Nextcloud (if setting up Nextcloud)

If one of the services you’re setting up is Nextcloud (as it was for me) you’ll need to edit a config file to tell Nextcloud the domain to use. This is specific to Nextcloud and won’t be required for other applications (although they may have their own configuration that needs to be done) but since I figure other people might hit the same issue I should go over what needs to be updated.

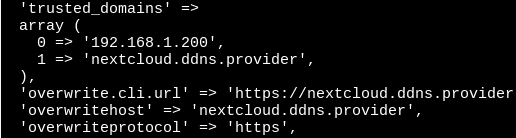

Get a console on the nextcloud container (make sure it’s not the nextcloud db container) and open the /config/www/nextcloud/config/config.php file for editing.

You’ll need to make sure that your domain is listed in the trusted_domains array, that you have overwrite.cli.url and overwritehost entries that use your domain, and that overwriteprotocol is set to https. For our example, the domain in all of those entries is nextcloud.ddns.provider.
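As a sketch, the entries inside the heredoc below are roughly what the relevant part of config.php might look like for nextcloud.ddns.provider. The snippet writes them to a scratch file instead of the real config, then greps for them, so it is safe to try anywhere. The `check_nextcloud_config` helper is hypothetical, and the array layout assumes Nextcloud’s usual `'key' => 'value',` formatting.

```shell
# Scratch copy of the entries described above, so the check can be tried
# without touching the real /config/www/nextcloud/config/config.php.
sample="$(mktemp)"
cat > "$sample" <<'EOF'
<?php
$CONFIG = array (
  // ...your existing entries stay as they are...
  'trusted_domains' =>
  array (
    0 => 'nextcloud.ddns.provider',
  ),
  'overwrite.cli.url' => 'https://nextcloud.ddns.provider',
  'overwritehost' => 'nextcloud.ddns.provider',
  'overwriteprotocol' => 'https',
);
EOF

# Succeeds only if all four settings are present for the given domain.
# The first grep is a loose match on a numbered array entry.
# Usage: check_nextcloud_config FILE DOMAIN
check_nextcloud_config() {
  grep -qE "^[[:space:]]*[0-9]+ => '$2'," "$1" &&
  grep -qF "'overwrite.cli.url' => 'https://$2'" "$1" &&
  grep -qF "'overwritehost' => '$2'" "$1" &&
  grep -qF "'overwriteprotocol' => 'https'" "$1"
}

check_nextcloud_config "$sample" nextcloud.ddns.provider && echo "config looks right"
```

To check the real file, run the function from a console on the nextcloud container with the real path as the first argument.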

6. Forward incoming requests to other Docker containers

The Let’s Encrypt image includes Nginx which we can use as a reverse proxy to forward incoming requests to different docker images based on the server name that was used for the request.

Open up a console on the Let’s Encrypt container and navigate to /config/nginx/proxy-confs. Here you’ll find a WHOLE bunch of sample proxy configs for different applications.

The two we’ll want to use are airsonic.subdomain.conf.sample and nextcloud.subdomain.conf.sample. To start, copy or rename the files to airsonic.subdomain.conf and nextcloud.subdomain.conf. Then open the files for editing and find the config line that defines server_name. In each file, fill in the subdomain you want associated with the application. So, for example, in airsonic.subdomain.conf we’ll set:

server_name airsonic.ddns.provider;

This is the magic that allows us to use the same server and port for different requests and route them to different Docker containers based on the server name used in the request!
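The copy and edit can also be done in one go from the container’s console. This is a sketch: `enable_proxy_conf` is a made-up helper, and it assumes each sample has a single server_name line ending in a semicolon.

```shell
# Create APP.subdomain.conf from its .sample with server_name filled in.
# Usage: enable_proxy_conf APP FULL_DOMAIN [CONF_DIR]
enable_proxy_conf() {
  dir="${3:-/config/nginx/proxy-confs}"
  # Rewrite the server_name line, keeping its indentation, and write the
  # result to the new file. The .sample file is left untouched.
  sed "s/^\([[:space:]]*\)server_name .*;/\1server_name $2;/" \
    "$dir/$1.subdomain.conf.sample" > "$dir/$1.subdomain.conf"
}

# Example:
#   enable_proxy_conf airsonic  airsonic.ddns.provider
#   enable_proxy_conf nextcloud nextcloud.ddns.provider
```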

You can look over the rest of the config to see what it’s doing but I didn’t find I needed to change anything.

After you’ve edited both files restart your Let’s Encrypt container so it picks up the new nginx config files.

At this point I thought I was done but found things weren’t actually working. I think what was happening was nginx was using Docker’s DNS to try to look up the other containers (so it could forward along the request) but the containers that were to receive the requests weren’t on a network with the Let’s Encrypt container and it couldn’t find them. To fix that in each case we’ll have to make sure the containers share a common network.

The Nextcloud containers had already created a nextcloud_default network (I think for communication between the nextcloud and nextcloud_db containers) so I went into Portainer, found the letencrypt container, clicked on it and scrolled down to the bottom where there is a “Connected Networks” section:

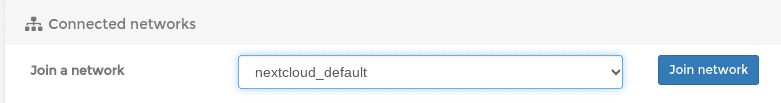

There’s a “Join a network” section you can use to put the letencrypt container on that network as well:

Once you hit the button you should see the network show up below.

Airsonic didn’t already have its own network (the only network that showed up in the “Connected networks” section of that container was the bridge to the host). To make sure Let’s Encrypt could talk to Airsonic I had to create a new network in Portainer.

There’s a “Networks” nav item on the left and inside that an “+Add Network” button. After clicking that you’ll be presented with a ton of configuration options. You don’t have to change any of them though. You can just fill in a descriptive network name and click the “Create the network” button at the bottom.

Then you have to visit both the airsonic and letsencrypt containers and add them to the new network you just created.
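The same network setup can be sketched with the docker CLI over SSH instead of clicking through Portainer. The `share_network` helper and the `airsonic_proxy` network name are made up for illustration; the container names are the ones used in this post, so adjust them to match yours.

```shell
# Put the given containers on a shared network, creating it if needed.
# Usage: share_network NETWORK CONTAINER...
share_network() {
  net="$1"
  shift
  # Create the network only if it does not exist yet.
  if ! docker network inspect "$net" >/dev/null 2>&1; then
    docker network create "$net"
  fi
  for container in "$@"; do
    docker network connect "$net" "$container"
  done
}

# Examples:
#   share_network nextcloud_default letencrypt        # network already exists
#   share_network airsonic_proxy airsonic letencrypt  # new network for Airsonic
```

Only pass containers that are not on the network yet, since connecting one that is already attached returns an error.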

Once that’s all done you should be good to go! Browse to https://airsonic.ddns.provider and https://nextcloud.ddns.provider and verify things are being routed correctly.
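For a quick check without a browser, something like the following prints the HTTP status each subdomain answers with over HTTPS. It is a rough sketch with a made-up `check_sites` helper. A failed certificate check makes curl print an error and report 000, which is a useful signal in itself.

```shell
# Print "HOST STATUS" for each host, requesting it over HTTPS.
# Usage: check_sites HOST...
check_sites() {
  for host in "$@"; do
    code="$(curl -sS -o /dev/null -w '%{http_code}' "https://$host")"
    echo "$host $code"
  done
}

# Example:
#   check_sites airsonic.ddns.provider nextcloud.ddns.provider
```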